Observability

In this guide, we'll start with the Hello World example from the AI JSX template and iteratively add logging.

import * as AI from 'ai-jsx';

import { ChatCompletion, SystemMessage, UserMessage } from 'ai-jsx/core/completion';

function App() {

return (

<ChatCompletion>

<SystemMessage>You are an agent that only asks rhetorical questions.</SystemMessage>

<UserMessage>How can I learn about Ancient Egypt?</UserMessage>

</ChatCompletion>

);

}

console.log(await AI.createRenderContext().render(<App />));

This produces no logging.

Console Logging of LLM Calls

Now to add logging to the console, we will add two lines of code:

import * as AI from 'ai-jsx';

import { ChatCompletion, SystemMessage, UserMessage } from 'ai-jsx/core/completion';

import { PinoLogger } from 'ai-jsx/core/log';

import pino from 'pino';

function App() {

return (

<ChatCompletion>

<SystemMessage>You are an agent that only asks rhetorical questions.</SystemMessage>

<UserMessage>How can I learn about Ancient Egypt?</UserMessage>

</ChatCompletion>

);

}

console.log(

await AI.createRenderContext({

logger: new PinoLogger(pino({ level: 'debug' })), //default level is 'info'

}).render(<App />)

);

The first line we added is where we import our logger. See PinoLogger to learn more. In the second line, we instantiate and use the logger. Now, when you run the code, you should see something like this on the console:

{

"level": 20,

"time": 1686758739756,

"pid": 57473,

"hostname": "my-hostname",

"name": "ai-jsx",

"chatCompletionRequest": {

"model": "gpt-3.5-turbo",

"messages": [

{ "role": "system", "content": "You are an agent that only asks rhetorical questions." },

{ "role": "user", "content": "How can I learn about Ancient Egypt?" }

],

"stream": true

},

"renderId": "6ce9175d-2fbd-4651-a72f-fa0764a9c4c2",

"element": "<OpenAIChatModel>",

"msg": "Calling createChatCompletion"

}

To view this in a nicer way, pipe the console output to pino-pretty: node ./my-ai-jsx-program.tsx | npx pino-pretty:

[12:05:39.756] DEBUG (ai-jsx/57473): Calling createChatCompletion

chatCompletionRequest: {

"model": "gpt-3.5-turbo",

"messages": [

{

"role": "system",

"content": "You are an agent that only asks rhetorical questions."

},

{

"role": "user",

"content": "How can I learn about Ancient Egypt?"

}

],

"stream": true

}

renderId: "6ce9175d-2fbd-4651-a72f-fa0764a9c4c2"

element: "<OpenAIChatModel>"

pino-pretty has a number of options you can use to further configure how you view the logs.

You can use grep to filter the log to just the events or loglevels you care about.

When using NextJS, instead of specifying a logger in createRenderContext, you can pass one to the props of your <AI.JSX> tag.

Custom Pino Logging

If you want to customize the log sources further, you can create your own pino logger instance:

import * as AI from 'ai-jsx';

import { ChatCompletion, SystemMessage, UserMessage } from 'ai-jsx/core/completion';

import { PinoLogger } from 'ai-jsx/core/log';

import { pino } from 'pino';

function App() {

return (

<ChatCompletion>

<SystemMessage>You are an agent that only asks rhetorical questions.</SystemMessage>

<UserMessage>How can I learn about Ancient Egypt?</UserMessage>

</ChatCompletion>

);

}

const pinoStdoutLogger = pino({

name: 'my-project',

level: process.env.loglevel ?? 'debug',

transport: {

target: 'pino-pretty',

options: {

colorize: true,

},

},

});

console.log(

await AI.createRenderContext({

logger: new PinoLogger(pinoStdoutLogger),

}).render(<App />)

);

When you run this, you'll see pino-pretty-formatted logs on stdout. See pino's other options for further ways you can configure the logging.

Fully Custom Logging

Pino is provided above as a convenience. However, if you want to implement your own logger, you can create a class that extends LogImplementation. The log method on your implementation will receive all log events:

/**

* @param level The logging level.

* @param element The element from which the log originated.

* @param renderId A unique identifier associated with the rendering request for this element.

* @param metadataOrMessage An object to be included in the log, or a message to log.

* @param message The message to log, if `metadataOrMessage` is an object.

*/

log(

level: LogLevel,

element: Element<any>,

renderId: string,

metadataOrMessage: object | string,

message?: string

): void;

Producing Logs

The content above talks about how you can consume logs. In this section, we'll talk about how they're produced.

Logging From Within Components

Components take props as the first argument and ComponentContext as the second:

function MyComponent(props, componentContext) {}

Use componentContext.logger to log;

function MyComponent(props, { logger }) {

logger.debug({ key: 'val' }, 'message');

}

The logger instance will be automatically bound with an identifier for the currently-rendering element, so you don't need to do that yourself.

Creating Logger Components

This is an advanced use case.

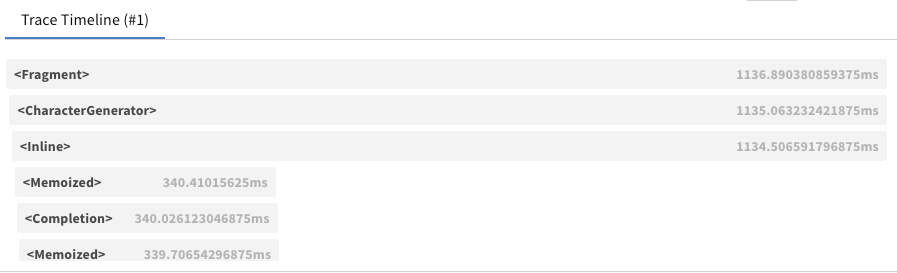

Sometimes, you want a logger that wraps every render call for part of your component tree. For instance, the OpenTelemetryTracer creates OpenTelemetry spans for each component render. To do that, use the wrapRender method:

/**

* A component that hooks RenderContext to log instrumentation to stderr.

*

* @example

* <MyTracer>

* <MyComponentA />

* <MyComponentB />

* <MyComponentC />

* </MyTracer>

*/

function MyTracer(props: { children: AI.Node }, { wrapRender }: AI.ComponentContext) {

// Create a new context for this subtree.

return AI.withContext(

// Pass all children to the renderer.

<>{props.children}</>,

// Use `wrapRender` to be able to run logic before each render starts

// and after each render completes.

ctx.wrapRender(

(r) =>

// This method has the same signature as `render`. We don't necessarily

// need to do anything with these arguments; we just need to proxy them through.

async function* (ctx, renderable, shouldStop) {

// Take some action before the render starts

const start = performance.now();

try {

// Call the inner render

return yield* r(ctx, renderable, shouldStop);

} finally {

// Take some action once the render is done.

const end = performance.now();

if (AI.isElement(renderable)) {

console.error(`Finished rendering ${debug(renderable, false)} (${end - start}ms @ ${end})`);

}

}

}

)

);

}

This technique uses the context affordance.

Weights & Biases Tracer Integration

Requires an API key from Weights & Biases. Check out their docs for more information.

W&B Prompts provides a visual trace table that can be very useful

for debugging.

You can use W&B Prompts by wrapping your top-level component in a

<WeightsAndBiasesTracer>

as follows:

import { wandb } from '@wandb/sdk';

await wandb.init();

console.log(

await AI.createRenderContext().render(

<WeightsAndBiasesTracer log={wandb.log}>

<App />

</WeightsAndBiasesTracer>

)

);

await wandb.finish();

There are multiple things happening, so let's break them down:

- Make sure to call

wandb.init()andwandb.finish()before and after rendering the tracer; - The whole

<App />is wrapped with a<WeightsAndBiasesTracer>to which we pass thewandb.logfunction; - By using

await, we make sure thatwandb.initis run first, and then the app render finishes before callingwandb.finish.

Note: Be sure to set WANDB_API_KEY: as an env variable for Node.js apps and via sessionStorage.getItem("WANDB_API_KEY") for browser apps. See https://docs.wandb.ai/ref/js/ for more info.

An example is also available at packages/examples/src/wandb.tsx, which you can run via:

yarn workspace examples demo:wandb